The hidden cost of scaling AI: infrastructure, energy, and the push for liquid cooling.

Updated January 8, 2026 6:31 PM

The inside of a data centre, with rows of server racks. PHOTO: FREEPIK

As artificial intelligence models grow larger and more demanding, the quiet pressure point isn’t the algorithms themselves—it’s the AI infrastructure that has to run them. Training and deploying modern AI models now requires enormous amounts of computing power, which creates a different kind of challenge: heat, energy use and space inside data centers. This is the context in which Supermicro and NVIDIA’s collaboration on AI infrastructure begins to matter.

Supermicro designs and builds large-scale computing systems for data centers. It has now expanded its support for NVIDIA’s Blackwell generation of AI chips with new liquid-cooled server platforms built around the NVIDIA HGX B300. The announcement isn’t just about faster hardware. It reflects a broader effort to rethink how AI data center infrastructure is built as facilities strain under rising power and cooling demands.

At a basic level, the systems are designed to pack more AI chips into less space while using less energy to keep them running. Instead of relying mainly on air cooling (fans, chillers and large amounts of electricity), these liquid-cooled AI servers circulate liquid directly across critical components. That approach removes heat more efficiently, allowing servers to run denser AI workloads without overheating or wasting energy.

Why does that matter outside a data center? Because AI doesn’t scale in isolation. As models become more complex, the cost of running them rises quickly, not just in hardware budgets, but in electricity use, water consumption and physical footprint. Traditional air-cooling methods are increasingly becoming a bottleneck, limiting how far AI systems can grow before energy and infrastructure costs spiral.

This is where the Supermicro–NVIDIA partnership fits in. NVIDIA supplies the computing engines—the Blackwell-based GPUs designed to handle massive AI workloads. Supermicro focuses on how those chips are deployed in the real world: how many GPUs can fit in a rack, how they are cooled, how quickly systems can be assembled and how reliably they can operate at scale in modern data centers. Together, the goal is to make high-density AI computing more practical, not just more powerful.

The new liquid-cooled designs are aimed at hyperscale data centers and so-called AI factories, facilities built specifically to train and run large AI models continuously. By increasing GPU density per rack and removing most of the heat through liquid cooling, these systems aim to ease a growing tension in the AI boom: the need for more computing power without an equally dramatic rise in energy waste.

Just as important is speed. Large organizations don’t want to spend months stitching together custom AI infrastructure. Supermicro’s approach packages compute, networking and cooling into pre-validated data center building blocks that can be deployed faster. In a world where AI capabilities are advancing rapidly, time to deployment can matter as much as raw performance.

Stepping back, this development says less about one product launch and more about a shift in priorities across the AI industry. The next phase of AI growth isn’t only about smarter models—it’s about whether the physical infrastructure powering AI can scale responsibly. Efficiency, power use and sustainability are becoming as critical as speed.


How a Korean biotech startup is using AI to move drug discovery from trial-and-error to precision design

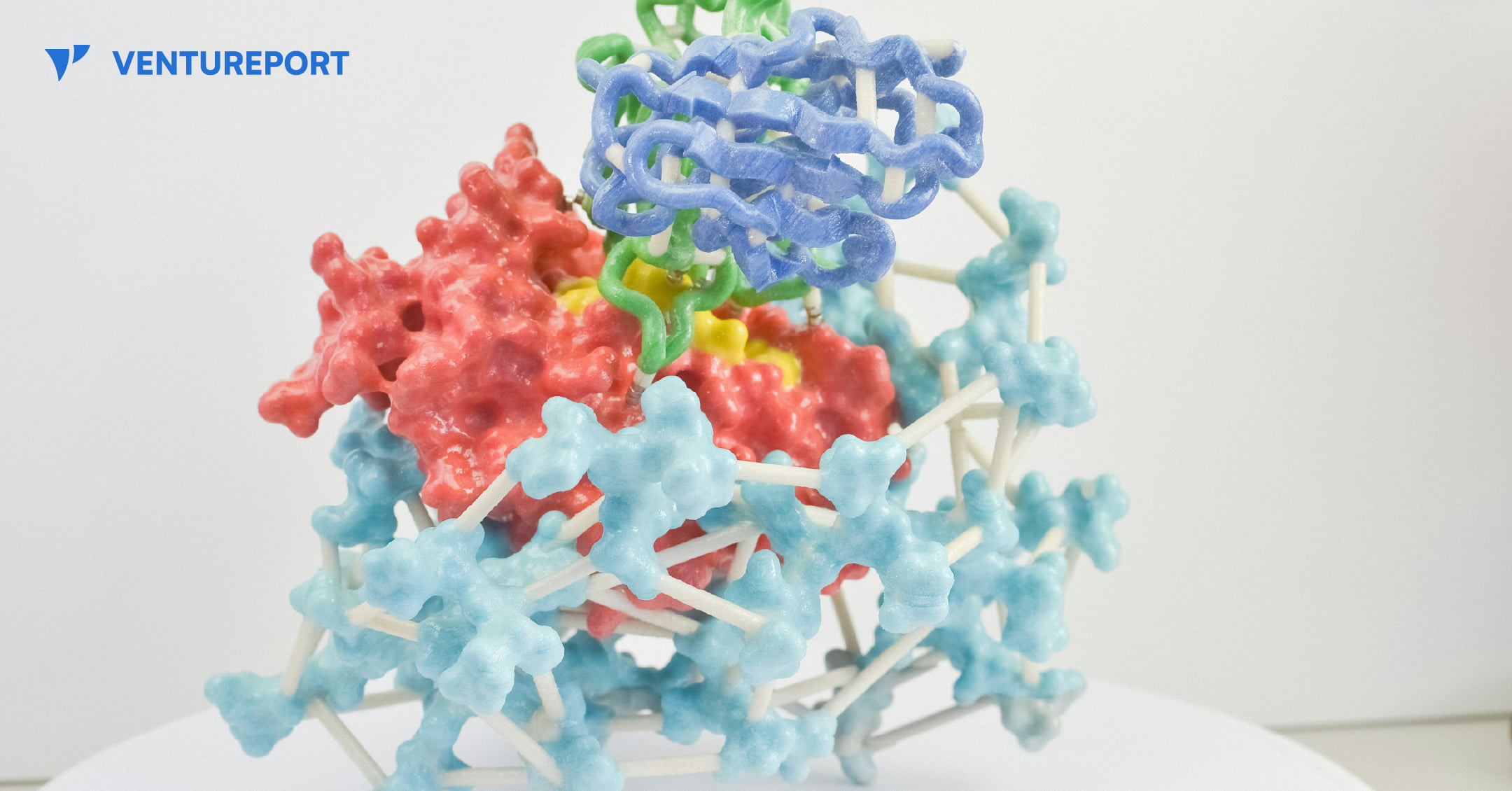

A close up of a protein structure model. PHOTO: UNSPLASH

For decades, drug discovery has relied on trial and error, with scientists testing thousands of molecules to find one that works. Galux, a South Korean biotech startup, is changing that by using AI to design proteins from scratch. This method, called “de novo” design, makes it possible to build precise new therapies instead of searching through existing ones.

The company recently announced a US$29 million Series B funding round, bringing its total capital to US$47 million. The round attracted a roster of institutional backers, including the Korea Development Bank (KDB), Yuanta Investment, SL Investment and NCORE Ventures. These firms joined existing investors such as InterVest, DAYLI Partners and PATHWAY Investment, as well as new participants including SneakPeek Investments, Korea Investment & Securities and Mirae Asset Securities.

At the core of the company’s work is a platform called GaluxDesign. Unlike many AI tools that only predict how existing proteins fold, this system uses deep learning and physics to create entirely new therapeutic antibodies. This “from scratch” approach lets the team go after so-called “undruggable” proteins. These are targets that traditional small-molecule drugs can’t reach because they lack clear binding pockets. By designing proteins to fit these complex shapes, Galux aims to unlock treatments that have stayed out of reach for decades. And that’s exactly why investors are paying attention.

The pharmaceutical industry is actively looking for faster and more efficient ways to develop new drugs, and Galux is built for exactly that. The company connects its AI platform directly to its own wet lab, where designs can be tested in real time. Each result feeds straight back into the system, sharpening the next round of models. This continuous loop speeds up discovery and improves precision at every step. It’s also why partners like Celltrion, LG Chem and Boehringer Ingelheim are already working with Galux.

Galux is no longer just trying to make drugs that stick to a target. The company now wants its AI to design medicines that actually work in the body and can be made at scale. In simple terms, a drug has to do more than bind to its target: it must be stable, safe and strong enough to change how the illness behaves. Galux is moving into tougher targets such as ion channels and GPCRs, which play key roles in heart function and sensory signals. Ultimately, the goal is to show that AI-driven design can turn complex biology into real treatments. And instead of hunting blindly for a solution, the team is building exactly what it needs.