How a Korean biotech startup is using AI to move drug discovery from trial-and-error to precision design

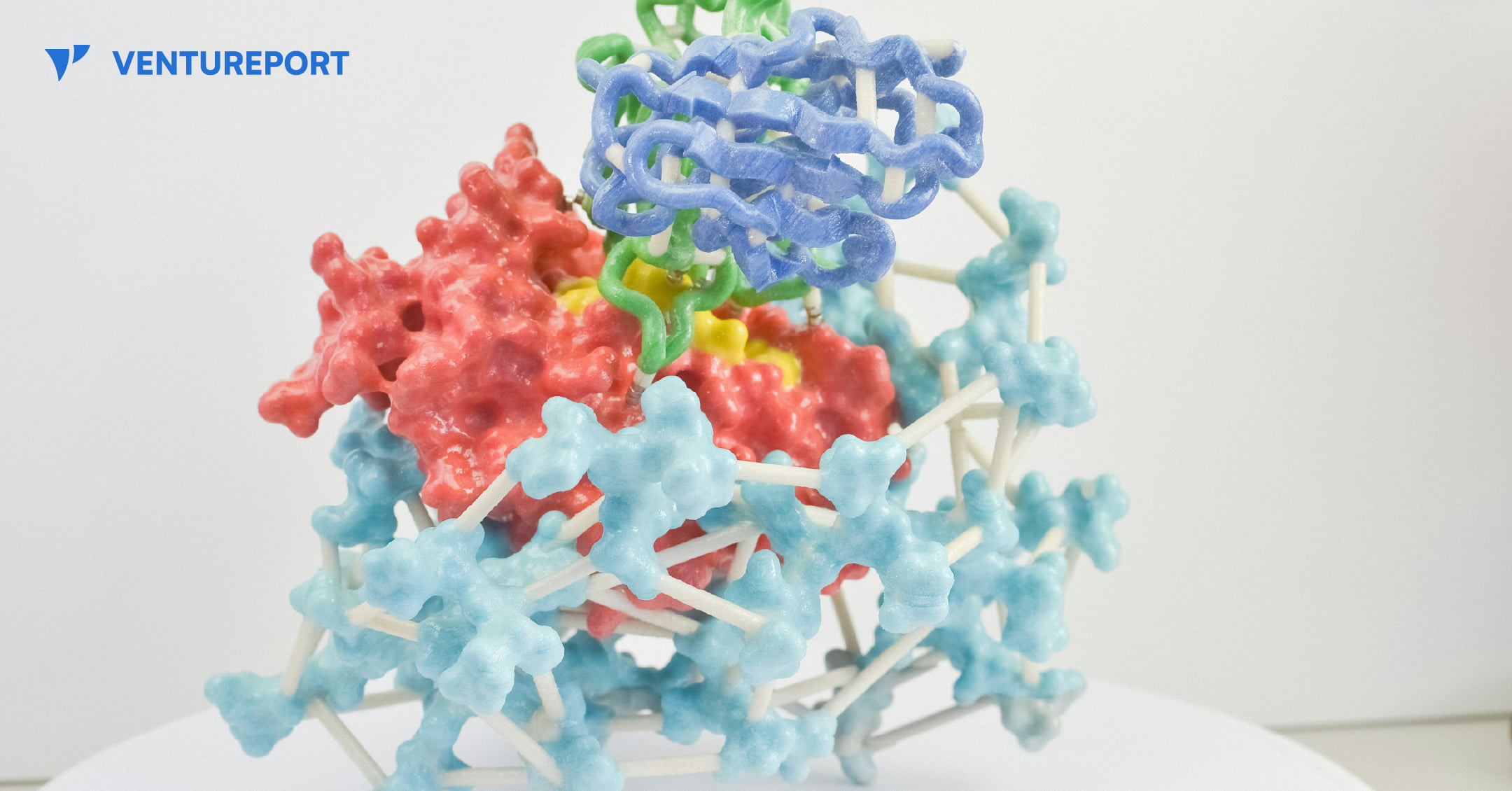

A close up of a protein structure model. PHOTO: UNSPLASH

For decades, drug discovery has relied on trial and error, with scientists testing thousands of molecules to find one that works. Galux, a South Korean biotech startup, is changing that by using AI to design proteins from scratch. This method, called “de novo” design, makes it possible to build precise new therapies instead of searching through existing ones.

The company recently announced a US$29 million Series B funding round, bringing its total capital to US$47 million. The round attracted a roster of institutional backers, including the Korea Development Bank (KDB), Yuanta Investment, SL Investment and NCORE Ventures. These firms joined existing investors such as InterVest, DAYLI Partners and PATHWAY Investment, as well as new participants including SneakPeek Investments, Korea Investment & Securities and Mirae Asset Securities.

At the core of the company’s work is a platform called GaluxDesign. Unlike many AI tools that only predict how existing proteins fold, this system uses deep learning and physics to create entirely new therapeutic antibodies. This “from scratch” approach lets the team go after so-called “undruggable” proteins. These are targets that traditional small-molecule drugs can’t reach because they lack clear binding pockets. By designing proteins to fit these complex shapes, Galux aims to unlock treatments that have stayed out of reach for decades. And that’s exactly why investors are paying attention.

The pharmaceutical industry is actively looking for faster and more efficient ways to develop new drugs, and Galux is built for exactly that. The company connects its AI platform directly to its own wet lab, where designs can be tested in real time. Each result feeds straight back into the system, sharpening the next round of models. This continuous loop speeds up discovery and improves precision at every step. It’s also why partners like Celltrion, LG Chem and Boehringer Ingelheim are already working with Galux.

Galux is no longer just trying to make drugs that stick to a target. The company now wants its AI to design medicines that actually work in the body and can be made at scale. In simple terms, a drug has to do more than bind to a disease target: it must be stable, safe and strong enough to change how the illness behaves. Galux is also moving into tougher targets such as ion channels and GPCRs, which play key roles in heart function and sensory signals. Ultimately, the goal is to show that AI-driven design can turn complex biology into real treatments. And instead of hunting blindly for a solution, the team is building exactly what it needs.

Keep Reading

Structured AI interviews and human judgment combine to address the global talent shortage

Updated March 4, 2026 4:46 PM

ManpowerGroup World Headquarters in Milwaukee. PHOTO: ADOBE STOCK

As hiring pressures mount across global markets, ManpowerGroup is turning to technology to strengthen how it connects people to work. The workforce solutions company has announced a global partnership with Hubert, a startup focused on AI-driven structured interviews. The aim is simple: make hiring faster and fairer, without removing the human touch.

ManpowerGroup has spent decades operating at the center of the global labor market. The company works with employers across industries to fill roles, manage workforce planning and build talent pipelines. With millions of placements each year, it has a clear view of how strained hiring has become. A large share of employers today report difficulty finding skilled talent. At the same time, candidates expect more transparency, quicker feedback and flexibility in how they engage with employers.

Hubert enters this picture as a specialist in structured digital interviewing. The startup has built tools that allow candidates to complete interviews online, at any time, while being assessed against consistent criteria. Instead of relying on informal screening calls or resume filters, its system focuses on standardized questions tied directly to job requirements. The idea is to bring more consistency to early-stage hiring.

The partnership brings these capabilities into ManpowerGroup’s global operations. AI-powered interviews will now support the first stage of screening, helping recruiters identify qualified candidates earlier in the process. This does not replace recruiters. Final decisions and contextual judgment remain with experienced hiring professionals. What changes is the speed and structure of the initial assessment.

For employers, this could mean earlier visibility into job-ready talent and less time spent on manual screening. For candidates, it offers more flexibility. A significant portion of interviews on Hubert’s platform are completed outside regular office hours, allowing applicants to engage when it suits them. That flexibility can make a difference in competitive labor markets where timing matters.

The collaboration is also positioned as a step toward reducing bias. By evaluating each candidate against the same transparent standards, the process becomes more consistent. While no system can remove bias entirely, structured assessments can reduce the variability that often comes with unstructured interviews.

At its core, the partnership addresses a gap many large organizations are facing. They need scale and speed, but they cannot afford to lose the human judgment that good hiring depends on. Manual processes are too slow. Fully automated systems can feel impersonal and risky. ManpowerGroup’s approach suggests a middle path, where technology handles repetition and structure and recruiters focus on potential and fit.

The move also reflects a broader shift in the workforce industry. AI is no longer being tested on the sidelines. It is being built into the foundation of hiring operations. For established players like ManpowerGroup, the challenge is not whether to adopt AI, but how to do so responsibly and at scale.

By working with Hubert, the company is signaling that the future of recruitment will likely blend structured digital tools with human expertise. In a market defined by talent shortages and rising expectations, that balance may prove critical.