HKU professor apologizes after PhD student’s AI-assisted paper cites fabricated sources.

Updated

January 8, 2026 6:33 PM

The University of Hong Kong in Pok Fu Lam, Hong Kong Island. PHOTO: ADOBE STOCK

It’s no surprise that artificial intelligence, while remarkably capable, can also go astray—spinning convincing but entirely fabricated narratives. From politics to academia, AI’s “hallucinations” have repeatedly shown how powerful technology can go off-script when left unchecked.

Take Grok-2, for instance. In July 2024, the chatbot misled users about ballot deadlines in several U.S. states, just days after President Joe Biden dropped his re-election bid against former President Donald Trump. A year earlier, a U.S. lawyer found himself in court for relying on ChatGPT to draft a legal brief—only to discover that the AI tool had invented entire cases, citations and judicial opinions. And now, the academic world has its own cautionary tale.

Recently, a journal paper from the Department of Social Work and Social Administration at the University of Hong Kong was found to contain fabricated citations—sources apparently created by AI. The paper, titled “Forty Years of Fertility Transition in Hong Kong,” analyzed the decline in Hong Kong’s fertility rate over the past four decades. Authored by doctoral student Yiming Bai, along with Yip Siu-fai, Vice Dean of the Faculty of Social Sciences and other university officials, the study identified falling marriage rates as a key driver behind the city’s shrinking birth rate. The authors recommended structural reforms to make Hong Kong’s social and work environment more family-friendly.

But the credibility of the paper came into question when inconsistencies surfaced among its references. Out of 61 cited works, some included DOI (Digital Object Identifier) links that led to dead ends, displaying “DOI Not Found.” Others claimed to originate from academic journals, yet searches yielded no such publications.

Speaking to HK01, Yip acknowledged that his student had used AI tools to organize the citations but failed to verify the accuracy of the generated references. “As the corresponding author, I bear responsibility,” Yip said, apologizing for the damage caused to the University of Hong Kong and the journal’s reputation. He clarified that the paper itself had undergone two rounds of verification and that its content was not fabricated; only the citations had been mishandled.

Yip has since contacted the journal’s editor, who accepted his explanation and agreed to re-upload a corrected version in the coming days. A formal notice addressing the issue will also be released. Yip said he would personally review each citation “piece by piece” to ensure no errors remain.

As for the student involved, Yip described her as a diligent and high-performing researcher who made an honest mistake in her first attempt at using AI for academic assistance. Rather than penalize her, Yip chose a more constructive approach, urging her to take a course on how to use AI tools responsibly in academic research.

Ultimately, in an age where generative AI can produce everything from essays to legal arguments, there are two lessons to take away from this episode. First, AI is a powerful assistant, but only that. The final judgment must always rest with us. No matter how seamless the output seems, cross-checking and verifying information remain essential. Second, as AI becomes integral to academic and professional life, institutions must equip students and employees with the skills to use it responsibly. Training and mentorship are no longer optional; they’re the foundation for using AI to enhance, not undermine, human work.

Because in this age of intelligent machines, staying relevant isn’t about replacing human judgment with AI; it’s about learning how to work alongside it.

Keep Reading

How a Korean biotech startup is using AI to move drug discovery from trial-and-error to precision design

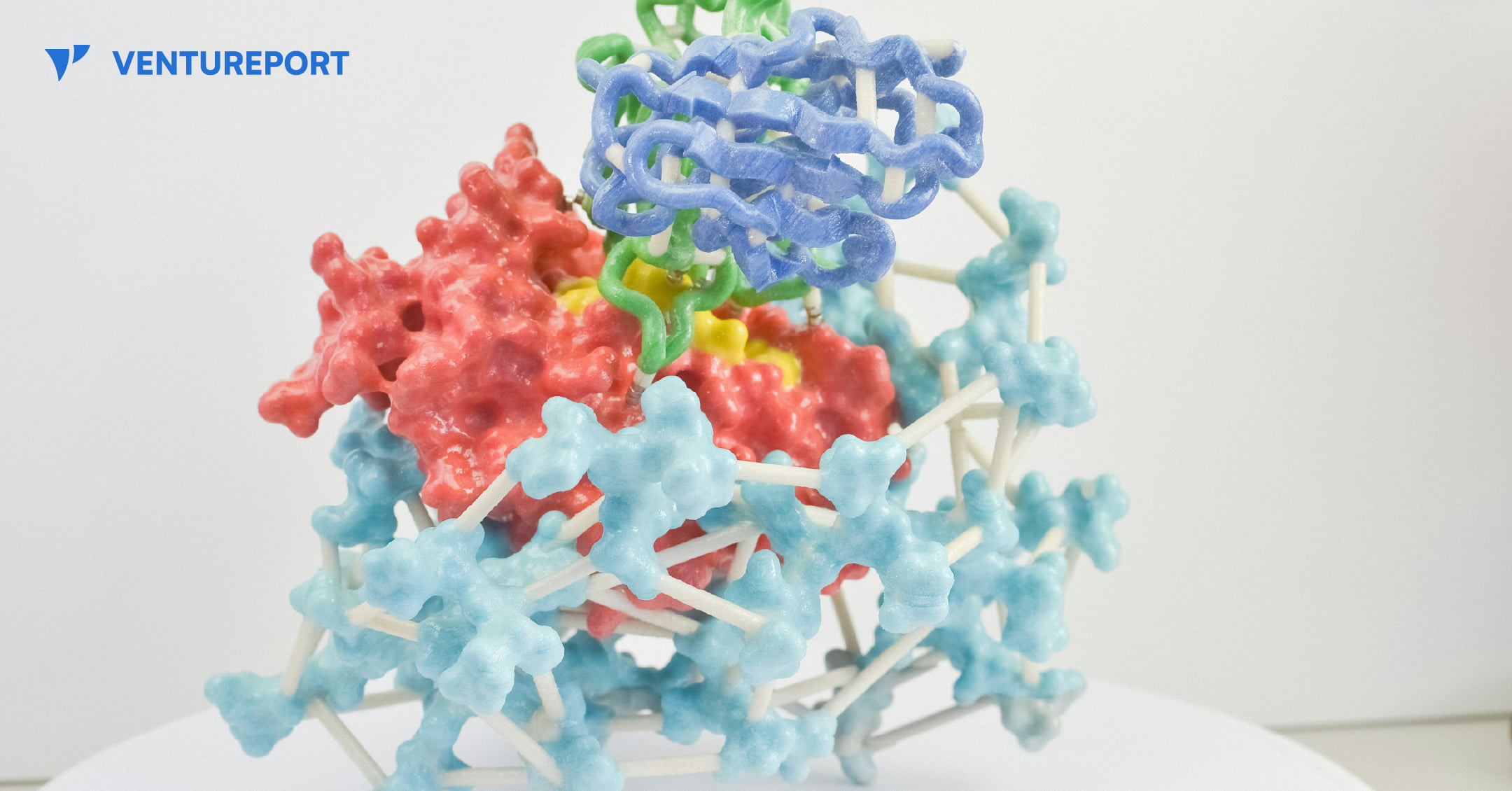

A close up of a protein structure model. PHOTO: UNSPLASH

For decades, drug discovery has relied on trial and error, with scientists testing thousands of molecules to find one that works. Galux, a South Korean biotech startup, is changing that by using AI to design proteins from scratch. This method, called “de novo” design, makes it possible to build precise new therapies instead of searching through existing ones.

The company recently announced a US$29 million Series B funding round, bringing its total capital to US$47 million. The round attracted a roster of institutional backers, including the Korea Development Bank (KDB), Yuanta Investment, SL Investment and NCORE Ventures. These firms joined existing investors such as InterVest, DAYLI Partners and PATHWAY Investment, as well as new participants including SneakPeek Investments, Korea Investment & Securities and Mirae Asset Securities.

At the core of the company’s work is a platform called GaluxDesign. Unlike many AI tools that only predict how existing proteins fold, this system uses deep learning and physics to create entirely new therapeutic antibodies. This “from scratch” approach lets the team go after so-called “undruggable” proteins. These are targets that traditional small-molecule drugs can’t reach because they lack clear binding pockets. By designing proteins to fit these complex shapes, Galux aims to unlock treatments that have stayed out of reach for decades. And that’s exactly why investors are paying attention.

The pharmaceutical industry is actively looking for faster and more efficient ways to develop new drugs, and Galux is built for exactly that. The company connects its AI platform directly to its own wet lab, where designs can be tested in real time. Each result feeds straight back into the system, sharpening the next round of models. This continuous loop speeds up discovery and improves precision at every step. It’s also why partners like Celltrion, LG Chem and Boehringer Ingelheim are already working with Galux.

Galux is no longer just trying to make drugs that stick to a target. The company now wants its AI to design medicines that actually work in the body and can be made at scale. In simple terms, a drug has to do more than bind to a disease—it must be stable, safe and strong enough to change how the illness behaves. Galux is moving into tougher targets such as ion channels and GPCRs. These play key roles in heart function and sensory signals. Ultimately, the goal is to show that AI-driven design can turn complex biology into real treatments. And instead of hunting blindly for a solution, the team is building exactly what they need.