The hidden cost of scaling AI: infrastructure, energy, and the push for liquid cooling.

Updated

January 8, 2026 6:31 PM

The inside of a data centre, with rows of server racks. PHOTO: FREEPIK

As artificial intelligence models grow larger and more demanding, the quiet pressure point isn’t the algorithms themselves—it’s the AI infrastructure that has to run them. Training and deploying modern AI models now requires enormous amounts of computing power, which creates a different kind of challenge: heat, energy use and space inside data centers. This is the context in which Supermicro and NVIDIA’s collaboration on AI infrastructure begins to matter.

Supermicro designs and builds large-scale computing systems for data centers. It has now expanded its support for NVIDIA’s Blackwell generation of AI chips with new liquid-cooled server platforms built around the NVIDIA HGX B300. The announcement isn’t just about faster hardware. It reflects a broader effort to rethink how AI data center infrastructure is built as facilities strain under rising power and cooling demands.

At a basic level, the systems are designed to pack more AI chips into less space while using less energy to keep them running. Instead of relying mainly on air cooling, with its fans, chillers and heavy electricity draw, these liquid-cooled AI servers circulate liquid directly across critical components. That approach removes heat more efficiently, allowing servers to run denser AI workloads without overheating or wasting energy.

Why does that matter outside a data center? Because AI doesn’t scale in isolation. As models become more complex, the cost of running them rises quickly, not just in hardware budgets, but in electricity use, water consumption and physical footprint. Traditional air-cooling methods are increasingly becoming a bottleneck, limiting how far AI systems can grow before energy and infrastructure costs spiral.

This is where the Supermicro–NVIDIA partnership fits in. NVIDIA supplies the computing engines—the Blackwell-based GPUs designed to handle massive AI workloads. Supermicro focuses on how those chips are deployed in the real world: how many GPUs can fit in a rack, how they are cooled, how quickly systems can be assembled and how reliably they can operate at scale in modern data centers. Together, the goal is to make high-density AI computing more practical, not just more powerful.

The new liquid-cooled designs are aimed at hyperscale data centers and so-called AI factories, facilities built specifically to train and run large AI models continuously. By increasing GPU density per rack and removing most of the heat through liquid cooling, these systems aim to ease a growing tension in the AI boom: the need for more computing power without an equally dramatic rise in energy waste.

Just as important is speed. Large organizations don’t want to spend months stitching together custom AI infrastructure. Supermicro’s approach packages compute, networking and cooling into pre-validated data center building blocks that can be deployed faster. In a world where AI capabilities are advancing rapidly, time to deployment can matter as much as raw performance.

Stepping back, this development says less about one product launch and more about a shift in priorities across the AI industry. The next phase of AI growth isn’t only about smarter models—it’s about whether the physical infrastructure powering AI can scale responsibly. Efficiency, power use and sustainability are becoming as critical as speed.

A new safety layer aims to help robots sense people in real time without slowing production

Updated

February 13, 2026 10:44 AM

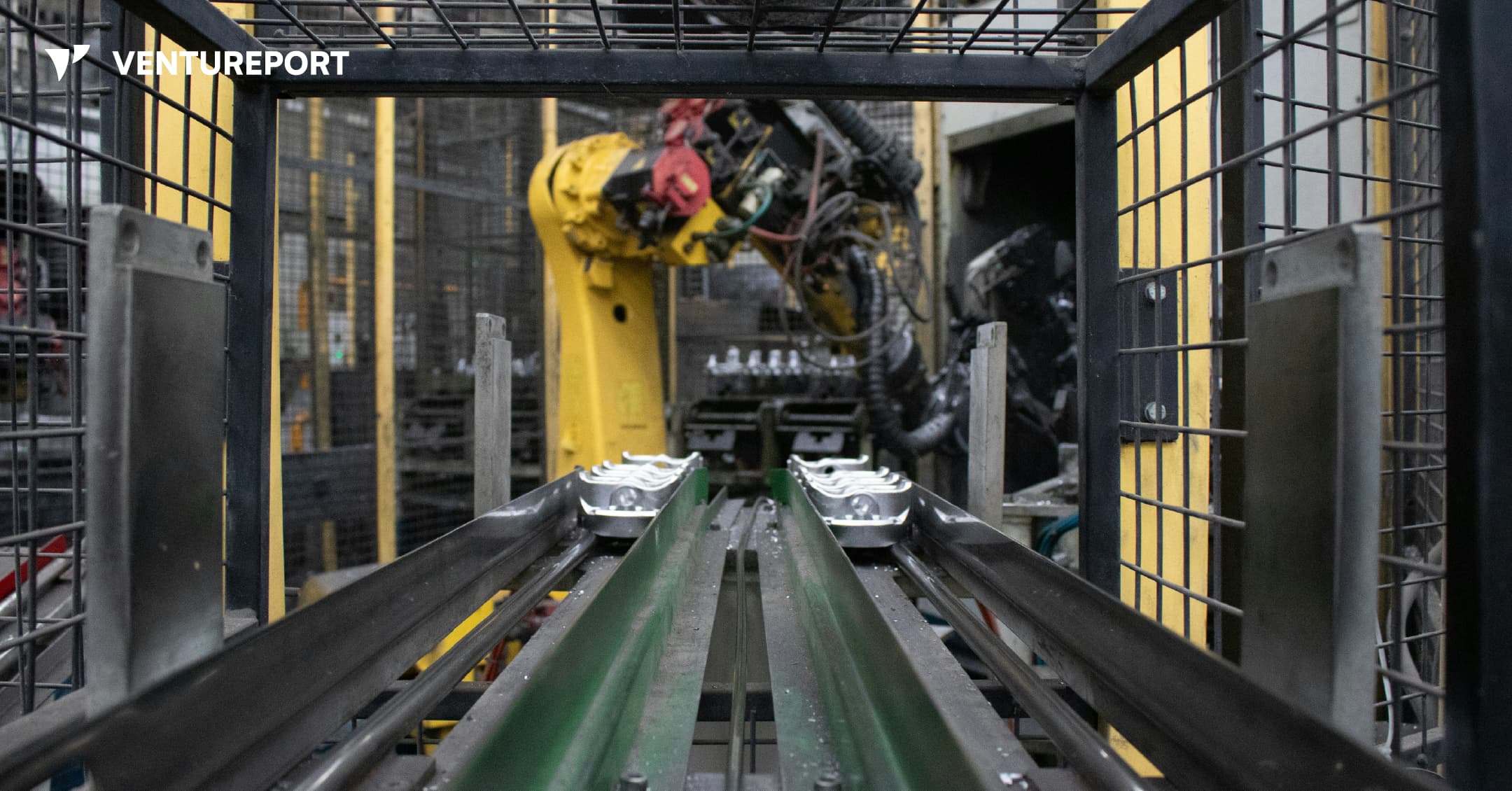

An industrial robot in a factory. PHOTO: UNSPLASH

Algorized has raised US$13 million in a Series A round to advance its AI-powered safety and sensing technology for factories and warehouses. The California- and Switzerland-based robotics startup says the funding will help expand a system designed to transform how robots interact with people. The round was led by Run Ventures, with participation from the Amazon Industrial Innovation Fund and Acrobator Ventures, alongside continued backing from existing investors.

At its core, Algorized is building what it calls an intelligence layer for “physical AI” — industrial robots and autonomous machines that function in real-world settings such as factories and warehouses. While generative AI has transformed software and digital workflows, bringing AI into physical environments presents a different challenge. In these settings, machines must not only complete tasks efficiently but also move safely around human workers.

This is where a clear gap exists. Today, most industrial robots rely on camera-based monitoring systems or predefined safety zones. For instance, when a worker steps into a marked area near a robotic arm, the system is programmed to slow down or stop the machine completely. This approach reduces the risk of accidents. However, it also means production lines can pause frequently, even when there is no immediate danger. In high-speed manufacturing environments, those repeated slowdowns can add up to significant productivity losses.

Algorized’s technology is designed to reduce that trade-off between safety and efficiency. Instead of relying solely on cameras, the company utilizes wireless signals — including Ultra-Wideband (UWB), mmWave, and Wi-Fi — to detect movement and human presence. By analysing small changes in these radio signals, the system can detect motion and breathing patterns in a space. This helps machines determine where people are and how they are moving, even in conditions where cameras may struggle, such as poor lighting, dust or visual obstruction.
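As a rough illustration of the idea of reading motion from small changes in a radio signal, the sketch below flags movement when recent signal amplitudes fluctuate more than a set threshold. It is a simplified stand-in, not Algorized's method: the function name, window size, threshold and sample values are all hypothetical.

```python
from statistics import pstdev


def motion_detected(amplitudes, window=5, threshold=0.5):
    """Return True if recent signal amplitudes vary more than `threshold`.

    Hypothetical illustration: a still room yields a nearly constant
    amplitude, while a moving person perturbs the signal and raises
    the spread of recent samples.
    """
    recent = list(amplitudes)[-window:]
    if len(recent) < 2:
        return False  # not enough samples to measure variation
    return pstdev(recent) > threshold


# Made-up amplitude readings, for illustration only
still_room = [10.0, 10.1, 9.9, 10.0, 10.1]
person_moving = [10.0, 12.0, 8.5, 11.5, 9.0]

print(motion_detected(still_room))
print(motion_detected(person_moving))
```

A real system would work with far richer signal data and trained models. The sketch only shows why a change in the signal, rather than an image, can be enough to indicate presence.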

Importantly, this data is processed locally at the facility itself — not sent to a remote cloud server for analysis. In practical terms, this means decisions are made on-site, within milliseconds. Reducing this delay, or latency, allows robots to adjust their movements immediately instead of defaulting to a full stop. The aim is to create machines that can respond smoothly and continuously, rather than reacting in a binary stop-or-go manner.
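The difference between a binary stop-or-go response and a continuous one can be sketched in a few lines. The example below is only an illustration under assumed values: the distances, function names and linear scaling rule are hypothetical, and it is neither the company's algorithm nor a certified safety function.

```python
def binary_speed(distance_m: float, zone_m: float = 2.0) -> float:
    """Fixed safety zone: full stop inside the zone, full speed outside."""
    return 0.0 if distance_m < zone_m else 1.0


def graded_speed(distance_m: float, stop_m: float = 0.5, zone_m: float = 2.0) -> float:
    """Scale speed linearly from 0 at `stop_m` up to 1 at `zone_m`.

    Thresholds are hypothetical values chosen for illustration.
    """
    if distance_m <= stop_m:
        return 0.0
    if distance_m >= zone_m:
        return 1.0
    return (distance_m - stop_m) / (zone_m - stop_m)


# A worker standing 1.25 m from the robot
print(binary_speed(1.25))  # 0.0: the line halts
print(graded_speed(1.25))  # 0.5: the robot slows to half speed
```

In the binary case, a worker anywhere inside the zone halts the machine. In the graded case, the robot keeps working at reduced speed until the person comes much closer.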

With the new funding, Algorized plans to scale commercial deployments of its platform, known as the Predictive Safety Engine. The company will also invest in refining its intent-recognition models, which are designed to anticipate how humans are likely to move within a workspace. In parallel, it intends to expand its engineering and support teams across Europe and the United States. These efforts build on earlier public demonstrations and ongoing collaborations with manufacturing partners, particularly in the automotive and industrial sectors.

For investors, the appeal goes beyond safety compliance. As factories become more automated, even small improvements in uptime and workflow continuity can translate into meaningful financial gains. Because Algorized’s system works with existing wireless infrastructure, manufacturers may be able to upgrade machine awareness without overhauling their entire hardware setup.

More broadly, the company is addressing a structural limitation in industrial automation. Robotics has advanced rapidly in precision and power, yet human-robot collaboration is still governed by rigid safety systems that prioritise stopping over adapting. By combining wireless sensing with edge-based AI models, Algorized is attempting to give machines a more continuous awareness of their surroundings from the start.