CES 2026 and the move toward wearable robots you don’t wear all day.

Updated

January 28, 2026 5:53 PM

The π6 exoskeleton from VIGX. PHOTO: VIGX

CES 2026 highlighted how robotics is taking many different forms. VIGX, a wearable robotics company, used the event to introduce the π6, a portable exoskeleton robot designed to be carried and worn only when needed. Unveiled in Las Vegas, the device reflects a broader shift at CES toward robotics that move with people rather than staying fixed in industrial or clinical settings.

Exoskeletons have existed for years, most commonly in controlled environments such as factories, rehabilitation facilities and specialised research settings. In these contexts, they have tended to be large, fixed systems intended for long sessions of supervised use rather than something a person could deploy on their own.

Against that backdrop, the π6 explores a more personal and flexible approach to assistance. Instead of treating an exoskeleton as permanent equipment, it is designed to be something users carry with them and wear only when a task or situation calls for extra support.

The π6 weighs 1.9 kilograms and folds down to a size that fits into a bag. When worn, it sits around the waist and legs, providing mechanical assistance during activities such as walking, climbing or extended movement. Rather than altering how people move, the system adds controlled rotational force at key joints to reduce physical strain over time.

According to the company, the device delivers up to 800 watts of peak power and 16 Nm of rotational force. In practical terms, this means the system is designed to help users sustain effort for longer periods, especially during physically demanding activities, by easing the body’s load rather than pushing it beyond normal limits.

The π6 is designed to support users weighing between 45 kilograms and 120 kilograms and is intended for intermittent use. This reinforces its role as a wearable companion — something taken out when needed and set aside when not — rather than a device meant to be worn continuously.

Another aspect of the system is how it responds to different environments. Using onboard sensors and processing, the exoskeleton can detect changes such as slopes or uneven ground and adjust the level of assistance accordingly. This reduces the need for manual adjustments and helps maintain a consistent walking experience across varied terrain, with software fine-tuning how assistance is applied rather than directing movement itself.

The hardware design follows a similar logic. The power belt contains a detachable battery, allowing users to remove or swap it without handling the entire system. This keeps the wearable components lighter and makes the exoskeleton easier to transport. The battery can also be used as a general power source for small electronic devices, adding a layer of practicality beyond the exoskeleton’s core function.

VIGX frames its work around accessibility rather than industrial automation. “To empower ordinary people,” said founder Bob Yu, explaining why the company chose to focus on exoskeleton robotics. “VIGX is dedicated to expanding the physical limits of humans, enabling deeper outdoor adventures, making running and cycling easier and more enjoyable and allowing people to sustain their outdoor pursuits regardless of age.”

Placed within the wider context of CES, the π6 sits alongside a growing number of portable robots and wearable systems that prioritise convenience, mobility and personal use. By reducing the physical and practical barriers to wearing an exoskeleton, VIGX is testing whether assistive robotics can move beyond niche environments and into everyday life. If that experiment succeeds, wearable robots may become less about dramatic augmentation and more about quiet support — present when needed and easy to put away when not.

Where Hollywood magic meets AI intelligence — Hong Kong becomes the new stage for virtual humans

Updated

February 7, 2026 2:18 PM

William Wong, Chairman and CEO of Digital Domain. PHOTO: YORKE YU

In an era where pixels and intelligence converge, few companies bridge art and science as seamlessly as Digital Domain. Founded three decades ago by visionary filmmaker James Cameron, the company built its name through cinematic wizardry—bringing to life the impossible worlds of Titanic, The Curious Case of Benjamin Button and the Marvel universe. But today, its focus has evolved far beyond Hollywood: Digital Domain is reimagining the future of AI-driven virtual humans—and it’s doing so from right here in Hong Kong.

“AI and visual technology are merging faster than anyone imagined,” says William Wong, Chairman and CEO of Digital Domain. “For us, the question is not whether AI will reshape entertainment—it already has. The question is how we can extend that power into everyday life.”

Though globally recognized for its work on blockbuster films and AAA games, Digital Domain’s story is also deeply connected to Asia. A Hong Kong–listed company, it operates a network of production and research centers across North America, China and India. In 2024, it announced a major milestone—setting up a new R&D hub at Hong Kong Science Park focused on advancing artificial intelligence and virtual human technologies. “Our roots are in visual storytelling, but AI is unlocking a new frontier,” Wong says. “Hong Kong has been very proactive in promoting innovation and research, and with the right partnerships, we see real potential to make this a global R&D base.”

Building on that commitment, the company plans to invest about HK$200 million over five years, assembling a team of more than 40 professionals specializing in computer vision, machine learning and digital production. The team is still growing. “Talent is everything,” says Wong. “We want to grow local expertise while bringing in global experience to accelerate the learning curve.”

Digital Domain’s latest chapter revolves around one of AI’s most fascinating frontiers: the creation of virtual humans.

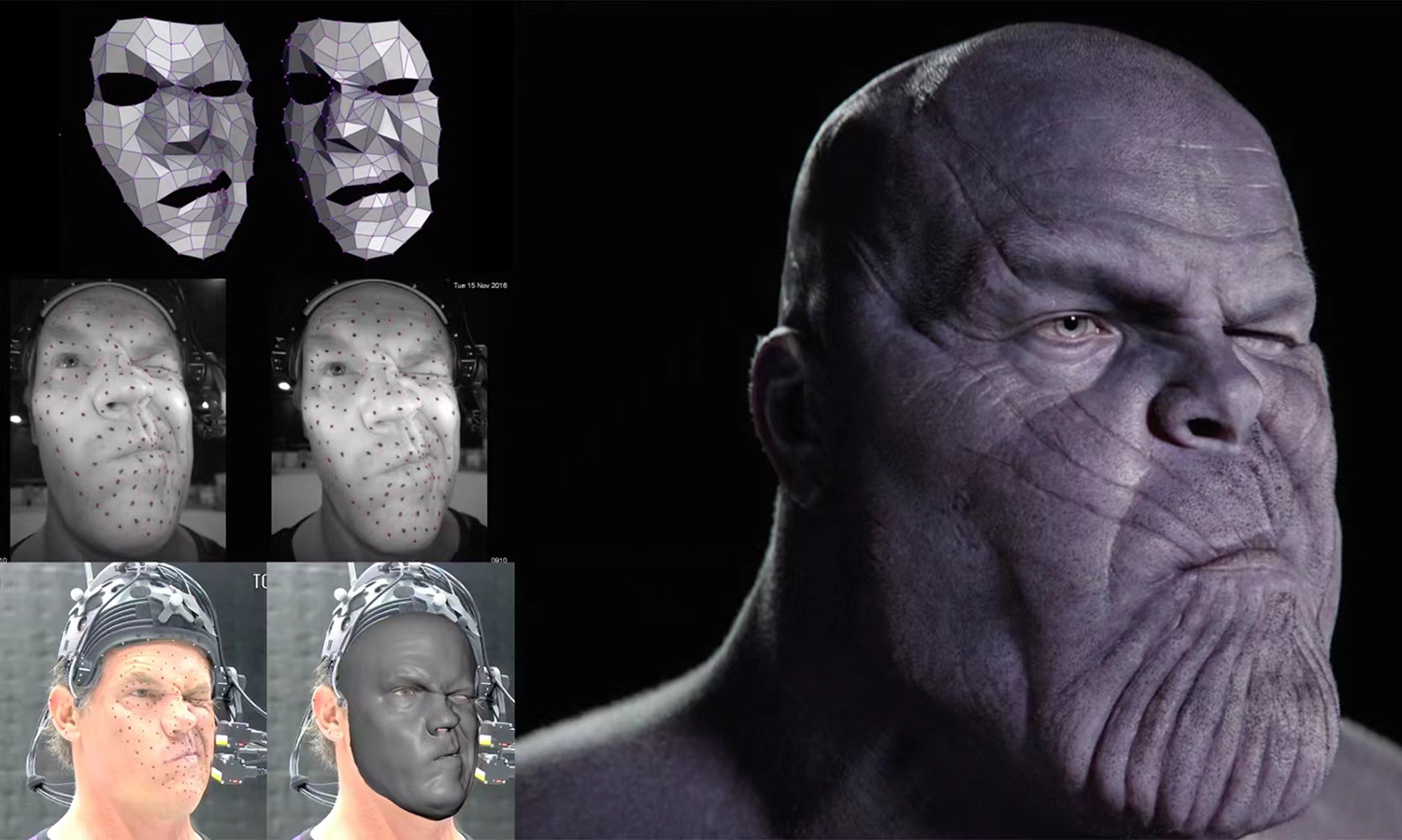

These are hyperrealistic, AI-powered virtual humans capable of speaking, moving and responding in real time. Using the advanced motion-capture and rendering techniques that transformed Hollywood visual effects, the company now builds digital personalities that appear on screens and in physical environments—serving in media, education, retail and even public services.

One of its most visible projects is “Aida”, the AI-powered presenter who delivers nightly weather reports on Radio Television Hong Kong (RTHK). Another initiative, now in testing, will soon feature AI-powered concierges greeting travelers at airports, able to communicate in multiple languages and provide real-time personalized services. Similar collaborations are under way in healthcare, customer service and education.

“What’s exciting,” says Wong, “is that our technologies amplify human capability, helping to deliver better experiences, greater efficiency and higher capacity. AI-powered virtual humans can interact naturally, emotionally and in any language. They can help scale creativity and service, not replace it.”

To make that possible, Digital Domain has designed its system for compatibility and flexibility. It can connect to major AI models—from OpenAI and Google to Baidu—and operate across cloud platforms like AWS, Alibaba Cloud and Microsoft Azure. “It’s about openness,” says Wong. “Our clients can choose the AI brain that best fits their business.”

Establishing a permanent R&D base in Hong Kong marks a turning point for the company—and, in a broader sense, for the city’s technology ecosystem. With the support of the Office for Attracting Strategic Enterprises (OASES) in Hong Kong, Digital Domain hopes to make the city a creative hub where AI meets visual arts. “Hong Kong is the perfect meeting point,” Wong says. “It combines international exposure with a growing innovation ecosystem. We want to make it a hub for creative AI.”

As part of this effort, the company is also collaborating with universities such as the University of Hong Kong, City University of Hong Kong and Hong Kong Baptist University to co-develop new AI solutions and nurture the next generation of engineers. “The goal,” Wong notes, “is not just R&D for the sake of research—but R&D that translates into real-world impact.”

The collaboration with OASES underscores how both the company and the city share a vision for innovation-led growth. As Peter Yan King-shun, Director-General of OASES, notes, the initiative reflects Hong Kong’s growing strength as a global innovation and technology hub. “OASES was set up to attract high-potential enterprises from around the world across key sectors such as AI, data science, and cultural and creative technology,” he says. “Digital Domain’s new R&D center is a strong example of how Hong Kong can combine world-class talent, technology and creativity to drive innovation and global competitiveness.”

Digital Domain’s story mirrors the evolution of Hong Kong’s own innovation landscape—where creativity, technology and global ambition converge. From the big screen to the next generation of intelligent avatars, the company continues to prove that imagination is not bound by borders, but powered by the courage to reinvent what’s possible.